GenAI and the explosion of software created by it.

As a veteran in the world of Mendix I am often asked: if generative AI is dramatically increasing the speed of software development, why do I need Mendix anymore?

As AI tools become more capable, this becomes a reasonable question to ask: if applications can be generated automatically, do structured low-code platforms like Mendix become less relevant?

Generative AI is speeding up some tasks, but it is not increasing our ability to control what we build.

And that gap is where the answer to the question lies.

According to McKinsey, generative AI may accelerate certain engineering tasks by 20–45%, and Gartner predicts that AI-assisted coding will soon become standard practice across enterprise teams. The productivity shift is real and measurable.

But productivity and control are not the same thing.

In practice, the need for structure is growing, not shrinking. The faster we build, the more structure and control we require. Independent security research has found that a significant proportion of AI-generated code contains security flaws: in some studies, nearly half of the generated code examined contained vulnerabilities (Veracode research, July 31, 2025).

Acceleration Without Constraint

Across many organizations, GenAI is being introduced incrementally. Developers experiment with AI-generated components. Prototypes move into production faster. Delivery cycles shorten.

Initially, this feels like pure progress.

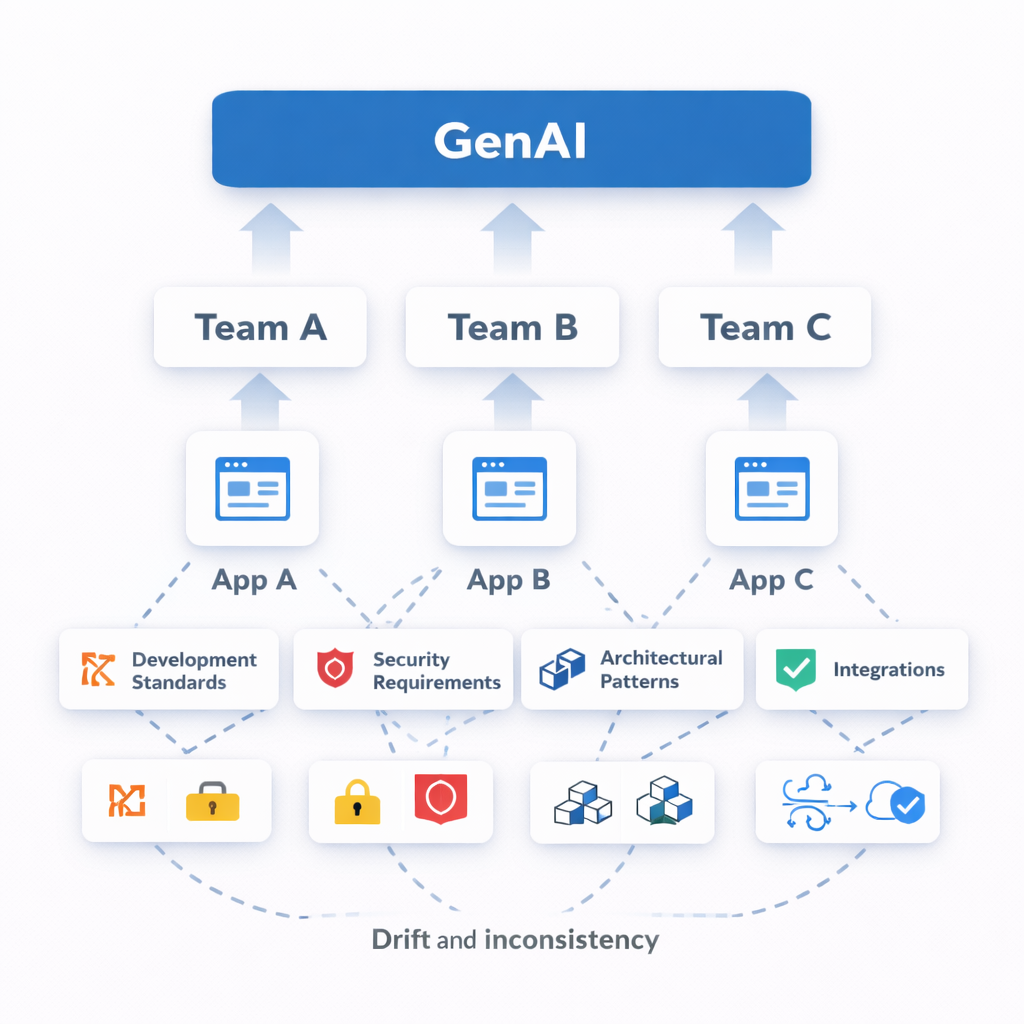

Over time, however, subtle inconsistencies begin to accumulate. Similar integrations are implemented in slightly different ways across teams. Business rules evolve independently. Data models diverge. Security configurations are embedded in generated logic without explicit validation against enterprise standards.

Nothing breaks immediately. But coherence degrades.

Acceleration without structural constraint does not scale innovation. It scales entropy. And in the world of software, entropy means risk and technical debt.

Using GenAI without structural and platform guardrails like Mendix creates entropy: architecture, development standards, security requirements, and integrations drift and become hard to manage.

What Generative AI Does Not Enforce

Generative AI produces plausible output. It looks good, doesn’t it? It does not, however, enforce system-wide consistency across dozens of applications. It does not institutionalize reuse. It does not design enterprise architecture. It does not guarantee adherence to internal control frameworks or regulatory requirements.

Enterprise software depends on constraint. Constraint stabilizes domain models, governs change impact, and preserves maintainability over time.

This is precisely why Mendix remains essential in an AI-accelerated environment.

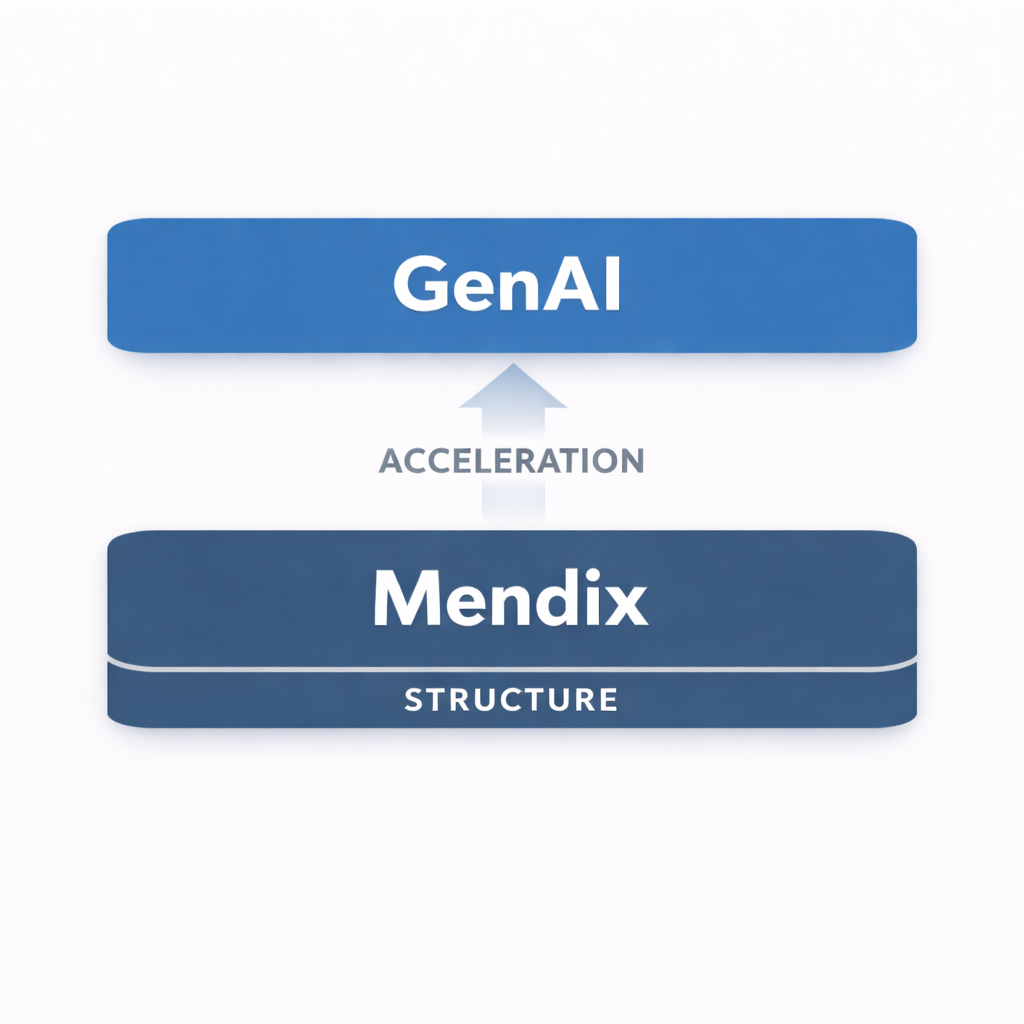

Its model-driven architecture enforces explicit domain models, structured logic flows, embedded role-based security, and governed Dev/Test/Production separation. Mendix does not merely help teams move faster; it provides the structural discipline that makes speed manageable.

AI increases velocity. Mendix ensures structural stability.

Mendix brings structure and predictability to software development that uses GenAI: a consistent technical architecture, reusable components, and an integrated security model.

The Governance Multiplier

Structure alone, however, is no longer sufficient. As development velocity increases, the surface area of risk expands proportionally. More frequent releases create more opportunities for drift. Greater variation increases the likelihood of inconsistency.

In this environment, governance cannot remain documentary. It cannot rely on policy documents, periodic audits, or manual evidence collection.

Governance must become operational.

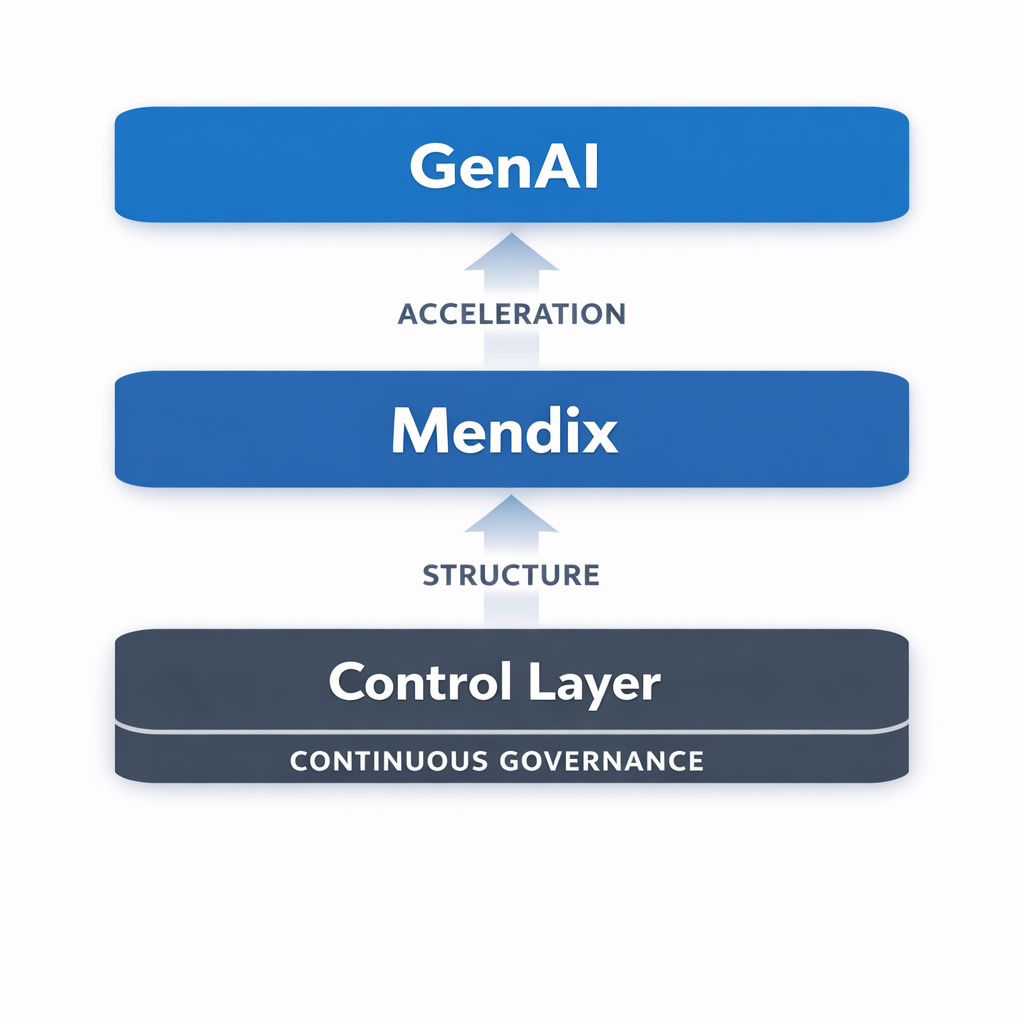

A control-first approach begins by defining what must be true—for security, quality, maintainability, and compliance. Each requirement is formalized as a control. Each control is mapped to measurable validation mechanisms such as policy checks and audit events. Coverage is explicitly defined. Evidence is generated continuously. Deviations trigger structured remediation.
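To make the idea concrete, here is a minimal sketch of a control expressed as data plus a check. Everything in it is an assumption made for illustration: the control IDs, the application attributes (`anonymous_access`, `test_coverage`), and the thresholds are hypothetical and do not come from Mendix or any specific governance product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Control:
    """A requirement that must be true, paired with a way to validate it."""
    control_id: str
    requirement: str
    check: Callable[[dict], bool]  # validation mechanism, e.g. a policy check


@dataclass(frozen=True)
class Evidence:
    """A timestamped record of one control evaluated against one application."""
    control_id: str
    app_name: str
    passed: bool
    checked_at: str


def evaluate(app: dict, controls: list[Control]) -> list[Evidence]:
    """Run every control against the application and record the outcome."""
    now = datetime.now(timezone.utc).isoformat()
    return [Evidence(c.control_id, app["name"], c.check(app), now) for c in controls]


def deviations(evidence: list[Evidence]) -> list[Evidence]:
    """Return the failed checks, i.e. the items that need remediation."""
    return [e for e in evidence if not e.passed]


# Hypothetical controls; a missing attribute is treated as a failure.
CONTROLS = [
    Control("SEC-01", "Anonymous access is disabled",
            lambda app: not app.get("anonymous_access", True)),
    Control("QLT-01", "Test coverage is at least 60%",
            lambda app: app.get("test_coverage", 0) >= 60),
]

# Hypothetical application metadata.
app = {"name": "claims-portal", "anonymous_access": False, "test_coverage": 42}

evidence = evaluate(app, CONTROLS)
for e in deviations(evidence):
    print(f"{e.app_name}: {e.control_id} failed, remediation required")
```

The point of the sketch is the shape, not the specifics: each requirement is explicit, each check is executable, and the evidence is a by-product of running the checks rather than something assembled afterwards.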

This reframes the central question.

When AI generates new functionality, it is not sufficient to ask whether it works or whether you created it faster than with low-code alone. The more important question is whether it remains within defined control boundaries. Is it safe? Is it maintainable? Was it produced in a compliant manner?

If that cannot be answered in real time, governance is reactive by definition, and you won’t know the answer until it’s too late or too costly to respond.

Adding a governance layer to your technology stack ensures that as you scale, control scales with you. Control is not an afterthought; it is a continuous part of the application development lifecycle.

What This Means at Scale

Consider a Mendix portfolio of 80 applications developed by multiple teams. AI-assisted development increases throughput across the landscape.

Can you immediately determine which applications fully satisfy your security standards? Can you demonstrate compliance per environment without assembling screenshots before an audit? Can you detect architectural drift and technical debt before it becomes systemic risk?

If governance requires preparation, it is not continuous, and you are not in control.

A control-first governance model embeds evaluation directly into the lifecycle. Applications are continuously assessed against defined controls. Coverage is explicit—full, partial, or mapped. Evidence is produced automatically. Deviations surface immediately.
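As a rough illustration of what a portfolio-level view could look like, the sketch below rolls up per-control coverage across applications. The application names, control IDs, and results are invented for the example, and the three coverage levels are used only as labels; how each level is defined would depend on the governance model in use.

```python
from collections import Counter
from enum import Enum


class Coverage(Enum):
    FULL = "full"
    PARTIAL = "partial"
    MAPPED = "mapped"


# Hypothetical assessment results: application -> control ID -> coverage level.
PORTFOLIO = {
    "claims-portal": {"SEC-01": Coverage.FULL, "QLT-01": Coverage.FULL},
    "partner-onboarding": {"SEC-01": Coverage.PARTIAL, "QLT-01": Coverage.FULL},
    "field-service": {"SEC-01": Coverage.MAPPED, "QLT-01": Coverage.PARTIAL},
}


def fully_covered(portfolio: dict) -> list[str]:
    """Applications in which every control has full coverage."""
    return sorted(
        app for app, controls in portfolio.items()
        if all(level is Coverage.FULL for level in controls.values())
    )


def coverage_summary(portfolio: dict) -> Counter:
    """Count how many application/control pairs sit at each coverage level."""
    return Counter(
        level for controls in portfolio.values() for level in controls.values()
    )


summary = coverage_summary(PORTFOLIO)
print("Fully covered:", fully_covered(PORTFOLIO))
for level in Coverage:
    print(f"{level.value}: {summary[level]}")
```

With a view like this available at any moment, the questions above become lookups rather than audit preparation.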

Acceleration, gained through low-code or GenAI, becomes sustainable because control scales with it.

The Real Question

Generative AI is not replacing structured platforms.

It is increasing the operational cost and risk of functioning without them.

Mendix provides the architectural foundation required for enterprise-scale development and predictable use of GenAI. Continuous, enforced control ensures that this foundation remains secure, maintainable, and compliant as development velocity increases.

The conversation is no longer whether AI can build applications faster than low-code.

The conversation is whether we can control what it builds.

In the coming weeks I will be exploring this topic further — starting with what continuous compliance actually looks like in a Mendix landscape with dozens of applications.

Because defining controls is one thing.

Operating them at scale is another.

— Andrew Whalen

Founder, Blue Storm
