Most quality problems do not begin as mistakes.

They begin as shortcuts.

A similar integration is implemented slightly differently.

A naming convention is ignored “just this once.”

A reusable module is bypassed to save time.

A complex microflow grows instead of being refactored.

Nothing breaks.

The application works.

But over time, small deviations accumulate.

And accumulation becomes friction.

The Gradual Nature of Technical Debt

Technical debt in Mendix rarely appears as catastrophic failure.

It appears as hesitation.

A developer opens a model and needs longer to understand it.

Impact analysis becomes uncertain.

Changes require broader regression testing than expected.

Refactoring feels risky.

These signals indicate structural drift.

Not because the platform failed.

Not because developers lack skill.

But because variation increases without continuous validation.

When architectural guidelines are informal, consistency depends on memory and discipline alone.

At scale, that is fragile.

The traditional manual model for enforcing architectural and development standards must give way to an enforcement model that keeps pace with low-code and AI-assisted development teams.

What Maintainability Really Means

Maintainability is not about perfection.

It is about predictability.

Can another developer understand your model quickly?

Are integration patterns consistent across applications?

Do naming conventions communicate intent clearly?

Are dependencies transparent and intentional?

At portfolio scale, maintainability becomes systemic.

It includes:

- Consistent domain modeling patterns

- Controlled module reuse

- Observable model complexity

- Standardized integration architecture

- Clear separation of concerns

Without explicit enforcement, even strong teams drift over time.

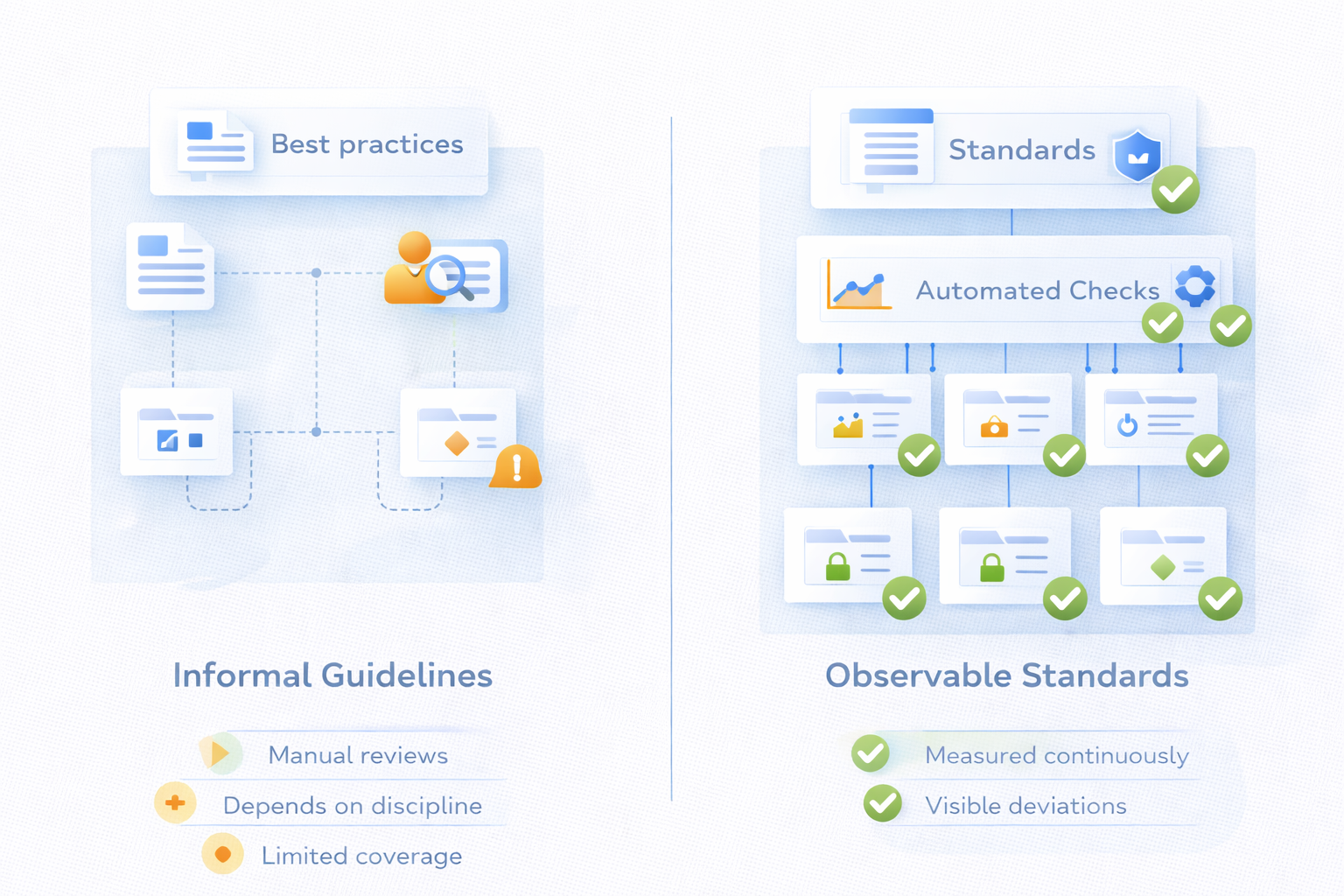

From Guidelines to Observable Standards

Most organizations have architectural principles.

They are documented.

They are explained during onboarding.

They are discussed during reviews.

But documentation does not prevent deviation.

Quality becomes sustainable only when expectations are technically observable.

For example:

- Model complexity thresholds can be measured automatically.

- Deprecated components can be detected across the portfolio.

- Naming conventions can be validated structurally.

- Reuse standards can be monitored.

- Dependency patterns can be analyzed continuously.

When quality standards become measurable, drift becomes visible early.

And early visibility prevents compounding debt.
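As an illustration of what "technically observable" can mean, the sketch below checks two of the standards listed above: a naming convention and a complexity threshold. It is a minimal example under stated assumptions, not a Mendix API. The `Microflow` record, the prefix list, and the limit of 25 activities are all hypothetical and stand in for whatever model metadata and thresholds your own tooling provides.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Microflow:
    """Hypothetical summary of one microflow, e.g. from a model export step."""
    module: str
    name: str
    activity_count: int


# Assumed convention: a known prefix, then underscore-separated PascalCase parts.
NAME_PATTERN = re.compile(r"^(ACT|SUB|VAL|DS)(_[A-Z][A-Za-z0-9]*)+$")

# Assumed threshold; a real value would come from the team's own standards.
MAX_ACTIVITIES = 25


def check_microflow(mf: Microflow) -> list[str]:
    """Return human-readable findings for one microflow (empty if compliant)."""
    findings = []
    qualified = f"{mf.module}.{mf.name}"
    if not NAME_PATTERN.match(mf.name):
        findings.append(f"{qualified}: name does not follow the convention")
    if mf.activity_count > MAX_ACTIVITIES:
        findings.append(
            f"{qualified}: {mf.activity_count} activities exceeds limit of {MAX_ACTIVITIES}"
        )
    return findings


if __name__ == "__main__":
    sample = [
        Microflow("Orders", "ACT_Order_Submit", 12),
        Microflow("Orders", "submitOrder", 40),
    ]
    for mf in sample:
        for finding in check_microflow(mf):
            print(finding)
```

The point of the design is that each standard becomes a small, deterministic rule that returns findings rather than a judgment made from memory during review.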

As architectural compliance and development best practices move from periodic peer reviews to state-based observable standards, they align more closely with engineering work and become an embedded, automated part of the process.

The Developer Experience

For developers, structural inconsistency creates daily friction.

You spend time understanding patterns that differ unnecessarily.

You duplicate logic because reuse is unclear.

You hesitate before refactoring because dependencies are opaque.

This increases cognitive load.

It reduces flow.

When standards are embedded into the lifecycle, the experience changes.

If a complexity threshold is exceeded, you see it immediately.

If a module deviates from reuse guidelines, it is observable.

If architectural patterns drift, the deviation is explicit.

You correct structure at the moment it begins to diverge.

Not years later during modernization.
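One way to surface a deviation at the moment it begins is to compare each change against the previous state of the model. The sketch below assumes two hypothetical snapshots that map microflow names to activity counts, and flags only the microflows that crossed the threshold in the current change. The function names and the snapshot format are illustrative assumptions, not part of any Mendix tooling.

```python
from __future__ import annotations


def newly_exceeded(
    previous: dict[str, int], current: dict[str, int], threshold: int
) -> list[str]:
    """Return names that are over the threshold now but were not before."""
    return sorted(
        name
        for name, count in current.items()
        if count > threshold and previous.get(name, 0) <= threshold
    )


def gate(previous: dict[str, int], current: dict[str, int], threshold: int) -> int:
    """Return an exit code: 0 if nothing crossed the threshold, 1 otherwise."""
    offenders = newly_exceeded(previous, current, threshold)
    for name in offenders:
        before = previous.get(name, 0)
        print(f"{name}: grew from {before} to {current[name]} activities")
    return 1 if offenders else 0
```

Reporting only newly crossed thresholds keeps the feedback tied to the change that caused it, so existing debt does not drown out the signal.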

Craftsmanship at Scale

High-quality engineering is not only about delivering working functionality.

It is about leaving systems in a state that others can evolve safely.

Craftsmanship includes:

- Clarity of structure

- Consistency of patterns

- Intentional reuse

- Controlled growth of complexity

When governance supports these principles continuously, quality stops being aspirational.

It becomes operational.

Developers gain confidence that their work aligns with broader architectural standards.

They do not depend solely on peer review memory.

They operate within observable quality boundaries.

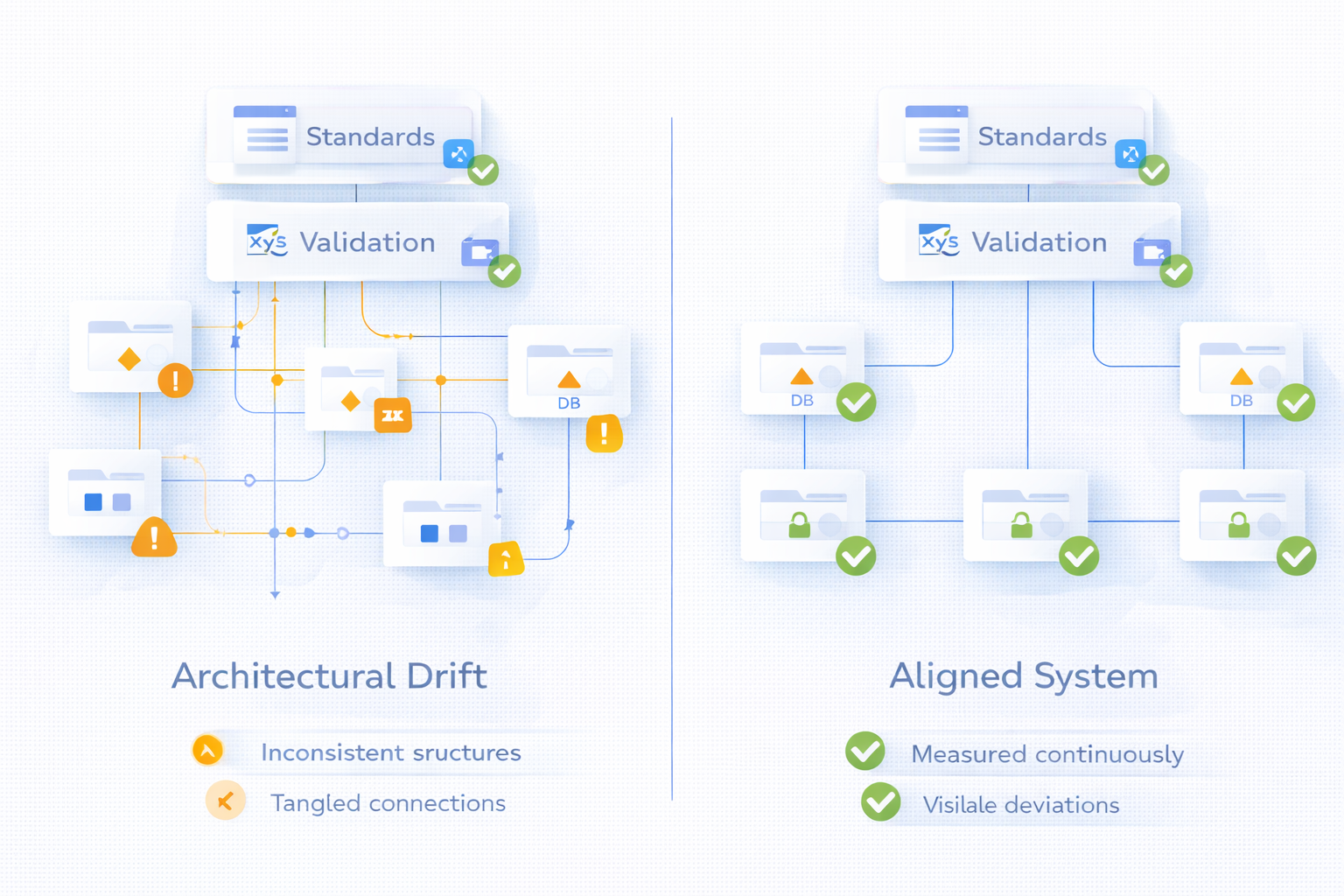

Scaling Across a Portfolio

Now consider a Mendix portfolio of 100 applications built over several years.

Without continuous evaluation, patterns diverge:

- Multiple ways to implement the same integration.

- Different approaches to entity naming.

- Inconsistent separation between core logic and UI logic.

- Modules that should have been retired remain in production.

At small scale, this is manageable.

At portfolio scale, it becomes a constraint.

Continuous quality validation ensures that every application is assessed against defined architectural standards repeatedly and automatically.

Not during periodic clean-up initiatives.

Continuously.

For developers, this creates shared discipline across teams.

And shared discipline reduces long-term friction.

Because every team operates within the same observable control framework, systemic drift from architectural and development standards is not allowed to accumulate.
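At portfolio level, the same idea applies to aggregated data. The sketch below assumes a hypothetical inventory of integration usages and installed modules per application, and reports two of the divergences described above: target systems integrated in more than one way, and retired modules still in use. The data shapes, application names, and pattern labels are invented for illustration.

```python
from __future__ import annotations

from collections import defaultdict


def divergent_integrations(
    usages: list[tuple[str, str, str]]
) -> dict[str, list[str]]:
    """usages holds (application, target system, pattern) tuples.

    Return only the target systems that are integrated in more than one way.
    """
    patterns_by_target: dict[str, set[str]] = defaultdict(set)
    for _app, target, pattern in usages:
        patterns_by_target[target].add(pattern)
    return {
        target: sorted(patterns)
        for target, patterns in patterns_by_target.items()
        if len(patterns) > 1
    }


def retired_modules_in_use(
    modules_by_app: dict[str, set[str]], retired: set[str]
) -> dict[str, list[str]]:
    """Return, per application, the retired modules it still contains."""
    return {
        app: sorted(modules & retired)
        for app, modules in modules_by_app.items()
        if modules & retired
    }


if __name__ == "__main__":
    usages = [
        ("Claims", "CRM", "shared REST module"),
        ("Billing", "CRM", "custom Java action"),
        ("Billing", "ERP", "shared REST module"),
    ]
    print(divergent_integrations(usages))
    print(retired_modules_in_use({"Claims": {"LegacyAuth", "Core"}}, {"LegacyAuth"}))
```

Run on a schedule across every application, a report like this turns portfolio divergence into a list that can be worked down incrementally.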

Why This Matters Now

AI-assisted development accelerates model creation.

Low-code reduces implementation barriers.

Application portfolios expand quickly.

Without structural safeguards, velocity amplifies inconsistency.

If quality governance depends on manual reviews alone, review capacity becomes the bottleneck.

If standards are embedded and observable, growth remains sustainable.

Maintainability scales with delivery.

The Real Question

The question is not whether your teams understand best practices.

The question is whether deviation from those practices can occur silently.

If architectural drift is only discovered during modernization projects, correction is expensive.

If drift is visible immediately, correction is incremental and manageable.

Because building fast is valuable.

Remaining adaptable over time is strategic.

And in regulated environments, sustained adaptability is not optional.

It is part of professional engineering.

— Andrew Whalen

Founder, Blue Storm
