Security work often feels reactive.

A vulnerability scan produces findings.

A penetration test identifies misconfigurations.

An access review uncovers excessive privileges.

A hotfix must be rushed into production.

The cycle is familiar.

Build.

Release.

Scan.

Fix.

For developers, this pattern is inefficient and frustrating.

Because most security issues are not fundamentally complex.

They are structural.

And structural problems should not rely on detection alone.

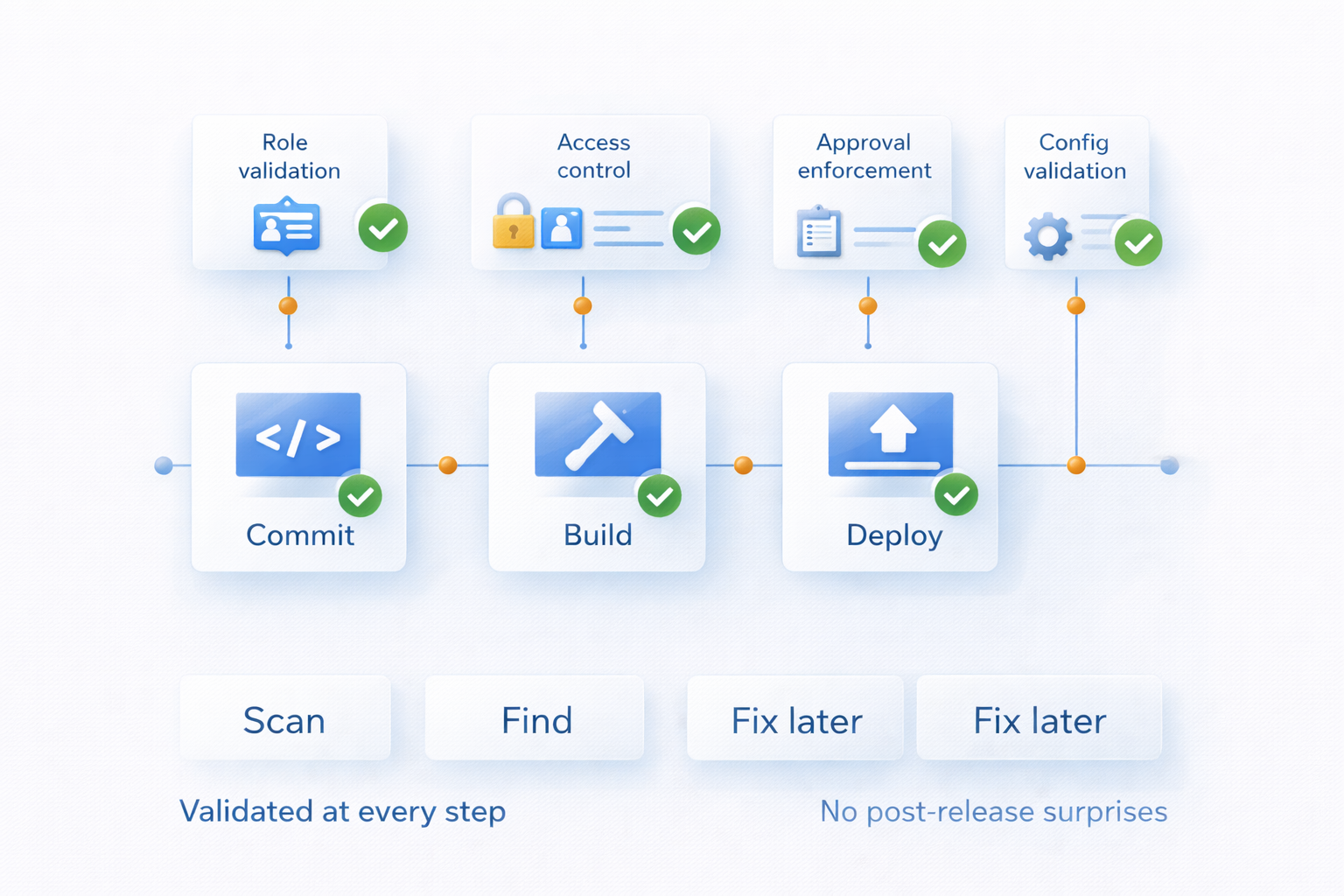

The Detection Model

Traditional security processes are built around discovery.

Periodic scanning tools evaluate applications.

Penetration tests simulate adversarial behavior.

Manual reviews inspect role models and configuration settings.

Findings are logged.

Remediation tickets are created.

Deadlines are set.

This approach assumes that security deviations are occasional and manageable.

But in a Mendix landscape with frequent deployments, environment changes, and multiple teams contributing across a portfolio, deviation is not occasional.

It is statistically inevitable.

Every change introduces configuration decisions:

- Which user role has access to this microflow?

- Is this entity exposed appropriately?

- Does this deployment follow the correct approval path?

- Are production-only permissions properly restricted?

If enforcement is not embedded, mistakes will occur.

Not because developers are careless.

But because velocity amplifies variance.

The traditional detection model must be replaced with an enforcement model that matches the pace expected of low-code and AI-assisted development teams.

What Secure by Design Actually Requires

Secure development in Mendix must move beyond guidance.

Documentation and best practices are necessary.

But guidance does not enforce itself.

Secure by design requires that critical security requirements become technically observable and enforceable.

For example:

- Role models are validated against defined least-privilege policies.

- Environment access is checked continuously against segregation rules.

- Deployment flows enforce structured approval chains.

- Security-sensitive configuration changes generate mandatory audit events.

- Deviations trigger visible alerts rather than silent drift.
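As an illustration of the first and last points, here is a minimal sketch of a least-privilege check. It assumes the role model can be exported as a mapping from user role to granted permissions; the role names, permission strings, and the `POLICY` table are hypothetical and do not come from any Mendix API.

```python
from dataclasses import dataclass

# Hypothetical policy: the maximum set of permissions each user role may hold.
# Role and permission names are illustrative only.
POLICY = {
    "Employee": {"read:Order"},
    "Manager": {"read:Order", "approve:Order"},
}


@dataclass(frozen=True)
class Violation:
    role: str
    excess: frozenset


def check_least_privilege(role_model, policy=POLICY):
    """Return one Violation per role that holds permissions beyond the policy."""
    violations = []
    for role, granted in sorted(role_model.items()):
        allowed = policy.get(role, set())  # roles unknown to the policy are allowed nothing
        excess = set(granted) - allowed
        if excess:
            violations.append(Violation(role, frozenset(excess)))
    return violations


# Example role model in the assumed export shape.
role_model = {
    "Employee": {"read:Order", "delete:Order"},
    "Manager": {"read:Order", "approve:Order"},
}

for v in check_least_privilege(role_model):
    # A visible alert instead of silent drift.
    print(f"ALERT: role {v.role} exceeds policy: {sorted(v.excess)}")
```

Run on every change, a check like this turns a written least-privilege guideline into something that can fail loudly.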

Security becomes part of the architecture.

Not an afterthought layered on top.

The Developer Experience

When security is external to development, it interrupts flow.

A scan result appears weeks later.

A vulnerability must be traced back to a release.

A configuration drift must be explained retroactively.

This creates context switching.

It forces developers to revisit decisions long after the mental model has shifted.

When enforcement is embedded into the lifecycle, the experience changes.

If a configuration violates policy, it is visible immediately.

If an approval is missing, deployment does not proceed.

If a role exceeds defined boundaries, the deviation is explicit.

You correct issues at the moment of change, not months later.
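A rough sketch of what "deployment does not proceed" can look like in a pipeline step follows. The environment names, approver roles, and the `gate_deployment` function are assumptions made for illustration, not part of any specific deployment tool.

```python
class DeploymentBlocked(Exception):
    """Raised when a release does not satisfy the approval policy."""


# Hypothetical rule: production requires these approver roles;
# environments not listed here require none.
REQUIRED_APPROVALS = {"production": {"product_owner", "security_officer"}}


def gate_deployment(environment, approvals):
    """Return True if all required approvals are present, else raise DeploymentBlocked."""
    required = REQUIRED_APPROVALS.get(environment, set())
    missing = required - set(approvals)
    if missing:
        raise DeploymentBlocked(
            f"{environment}: missing approval from {sorted(missing)}"
        )
    return True


# Usage: the pipeline calls the gate before promoting a package.
try:
    gate_deployment("production", ["product_owner"])
except DeploymentBlocked as err:
    print(f"Blocked: {err}")
```

The point of raising an exception rather than logging a warning is that the missing approval stops the release at the moment of change.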

This reduces rework.

It reduces defensive communication.

It increases clarity.

As security compliance moves from evidence-based to state-based, it aligns better with engineering and becomes an embedded, automated part of the process.

Security as Engineering Discipline

High-level security discussions often focus on threats.

But from a developer’s perspective, the more important question is structural integrity.

Are the rules of the system explicit?

Are they enforced consistently?

Can they drift without detection?

Secure development becomes craftsmanship when constraints are clear.

You know which patterns are allowed.

You know which roles are acceptable.

You know which flows require structured approval.

You are not guessing.

You are operating within defined boundaries.

And defined boundaries do not limit creativity.

They eliminate avoidable risk.

Scaling Across a Portfolio

Now consider a Mendix landscape of 80 applications.

Multiple teams.

Multiple deployment cadences.

Multiple integration patterns.

Without continuous enforcement, small variations appear:

- A temporary privilege granted during testing remains in production.

- A deployment bypasses formal review under time pressure.

- An integration exposes more data than necessary.

Each deviation may seem minor.

But at portfolio scale, risk compounds.

Secure development at scale requires that every application be evaluated continuously against defined security controls.

Not during annual testing.

Not only after incidents.

Continuously.
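One way to picture continuous evaluation is a small job that runs the same set of controls over every application and reports only the failures. The sketch below assumes each app's current state is available as a dictionary; the field names, control names, and app names are hypothetical.

```python
# Each control is a named predicate over an app's current state (assumed dict shape).
CONTROLS = {
    "no_test_privileges_in_production": lambda app: not app["temporary_privileges"],
    "last_deployment_reviewed": lambda app: app["last_deployment_reviewed"],
}


def evaluate_portfolio(apps, controls=CONTROLS):
    """Map each app name to the sorted list of controls it currently fails."""
    report = {}
    for app in apps:
        failed = sorted(name for name, check in controls.items() if not check(app))
        if failed:
            report[app["name"]] = failed
    return report


# Illustrative portfolio of two apps; a real one would be loaded from live state.
portfolio = [
    {"name": "claims", "temporary_privileges": [], "last_deployment_reviewed": True},
    {"name": "billing", "temporary_privileges": ["DebugAdmin"], "last_deployment_reviewed": False},
]

print(evaluate_portfolio(portfolio))
```

Scheduling a job like this on every change, or at a short fixed interval, is what separates continuous evaluation from an annual test.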

For developers, this ensures that everyone operates under the same structural expectations.

Security becomes consistent across teams.

And consistency is what makes collaboration safe.

Because teams operate within the same observable control framework as everyone else, systemic drift from security policies and standards is not allowed to accumulate.

Why This Matters Now

AI-assisted development increases output.

Low-code reduces friction.

Feature throughput accelerates.

As velocity increases, the tolerance for ambiguity decreases.

If security assurance depends on periodic detection, remediation workload grows with release frequency.

If enforcement is embedded into the lifecycle, security scales with delivery.

This is not about slowing teams down.

It is about preventing avoidable rework and reputational risk.

The Real Question

The question is not whether your organization runs vulnerability scans.

The question is whether a Mendix application can drift outside defined security boundaries without immediate visibility.

If it can, security remains reactive.

If it cannot, because controls are embedded, validated, and observable, security becomes structural.

And structural security gives developers something essential in regulated environments:

Predictability.

Reduced remediation noise.

Confidence in every release.

Because shipping features is expected.

Shipping them within enforced security boundaries is professional engineering.

— Andrew Whalen

Founder, Blue Storm
